We trained for 8 epochs on a V100 (approx.

On-the-fly back translation and reconstruction on sentences which acts as translation trainingīelow is the algorithm for our training process:.Denoising autoencoder training on each language which acts as language model training.Discriminator training to constrain encoder to map both languages to the same latent space.

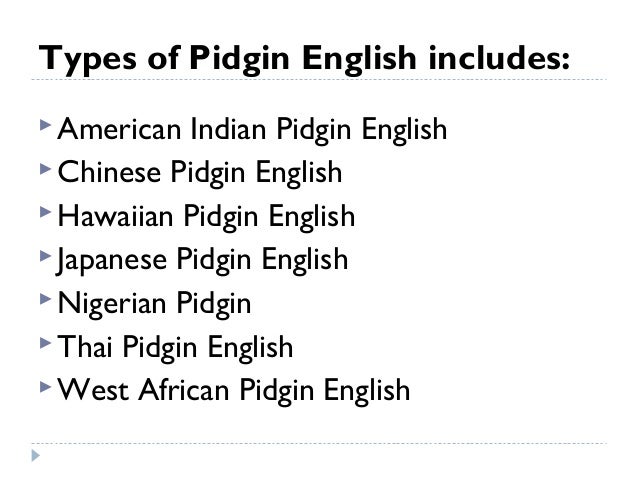

Test set was obtained from the JW300 dataset and preprocessed by the Masakhane group hereįor our results, at each training step, we performed the following: The creation of an Unsupervised Neural Machine Translation model between Pidgin and English which achieves a BLEU score of 7.93 from Pidgin to English and 5.18 from English to Pidgin on a test set of 2101 sentence pairs. This aligned vector will be helpful in the performance of various downstream tasks and transfer of models from English to Pidgin. Significantly better than a baseline of 0.0093 which is the probability of selecting the right nearest neighbor from the evaluation set of 108 pairs. The alignment of Pidgin word vectors with English word vectors which achieves a Nearest Neighbor accuracy of 0.1282. Link to paper - (Accepted at NeurIPS 2019 Workshop on Machine Learning for the Developing World) This repository contains the implementation of an Unsupervised NMT model from West African Pidgin (Creole) to English without using a single parallel sentence during training. Unsupervised Neural Machine Translation from West African Pidgin (Creole) to English
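To make the nearest-neighbor accuracy and its random baseline concrete, here is a minimal sketch of how such a metric can be computed. It assumes cosine similarity as the retrieval measure and that the gold translation of source word `i` sits at index `i` of the target list; neither detail is stated above, and the repository's own evaluation may differ. The function names and the toy 2-D vectors are hypothetical and are not the Pidgin or English embeddings.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nearest_neighbor_accuracy(source_vecs, target_vecs):
    """Fraction of source vectors whose most similar target vector
    is the one at the same index (assumed to be the gold translation)."""
    correct = 0
    for i, src in enumerate(source_vecs):
        best = max(range(len(target_vecs)),
                   key=lambda j: cosine(src, target_vecs[j]))
        correct += int(best == i)
    return correct / len(source_vecs)


def random_baseline(num_candidates):
    """Chance of picking the right neighbor uniformly at random."""
    return 1 / num_candidates


# Toy, made-up vectors used only to exercise the functions (assumption).
toy_pidgin = [[0.9, 0.1], [0.1, 0.8], [1.0, 0.2]]
toy_english = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

accuracy = nearest_neighbor_accuracy(toy_pidgin, toy_english)

# With 108 evaluation pairs, random guessing succeeds with probability 1/108,
# which rounds to the 0.0093 baseline quoted above.
baseline = random_baseline(108)
print(f"toy accuracy: {accuracy:.4f}, random baseline: {baseline:.4f}")
```

Under these assumptions, the reported accuracy of 0.1282 is roughly 14 times the chance-level baseline on the 108-pair evaluation set.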
